Multi AngLe Imaging Bidirectional Reflectance Distribution Function sUAS (MALIBU)

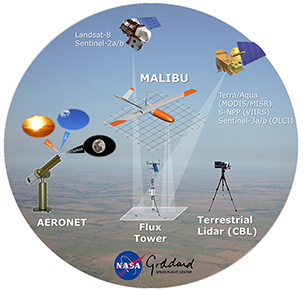

MALIBU is a demonstration instrument recently deployed on a prototype small Unmanned Aircraft System (s-UAS) as part of a series of pathfinder missions funded under NASA's Internal Research and Development (IRAD) Program. It belongs to a major, ongoing investment by NASA GSFC in enhanced multi-angular remote sensing techniques using s-UAS platforms.

The current MALIBU instrument package includes two separate multispectral imagers oriented at different viewing geometries (i.e., port and starboard sides) to capture vegetation optical properties and structural characteristics. These are derived by analyzing the surface reflectance anisotropy signal (i.e., BRDF shape) obtained when combining surface reflectances from different view-illumination angles and spectral channels. The Tetracam package carries two integrated Multiple Camera Array (MCA) multispectral imaging systems, an Incident Light Sensor (ILS), and a navigation system. The ILS captures downwelling radiation at the same wavelengths as the upwelling reflected radiation monitored by MALIBU's Tetracam channels. The navigation system is made up of a suite of independent navigation units (two VN200 IMUs, two GPS modules, and a field-of-view geotagging system) to ensure per-pixel geolocation accuracy of < 2 cm.
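As a rough illustration of how paired ILS and camera measurements can be combined, the sketch below computes a per-channel reflectance factor as π times the upwelling radiance divided by the downwelling irradiance. This is the textbook relation against an ideal Lambertian reference, not a description of MALIBU's actual calibration chain. The function name and all radiance and irradiance values are hypothetical.

```python
import math

# Center wavelengths (nm) of the six MALIBU channels.
CHANNELS_NM = [442, 488, 531, 560, 650, 861]


def reflectance_factor(radiance, irradiance):
    """Return pi * L / E for one channel.

    radiance:   upwelling spectral radiance L (W m^-2 sr^-1 nm^-1)
    irradiance: downwelling spectral irradiance E (W m^-2 nm^-1)
    """
    if irradiance <= 0:
        raise ValueError("irradiance must be positive")
    return math.pi * radiance / irradiance


# Hypothetical sample values, chosen only to make the example run.
upwelling = {442: 0.015, 488: 0.020, 531: 0.028, 560: 0.032, 650: 0.035, 861: 0.080}
downwelling = {442: 1.30, 488: 1.45, 531: 1.40, 560: 1.38, 650: 1.25, 861: 0.90}

brf = {wl: reflectance_factor(upwelling[wl], downwelling[wl]) for wl in CHANNELS_NM}

for wl in CHANNELS_NM:
    print(f"{wl} nm: reflectance factor = {brf[wl]:.3f}")
```

Measuring the downwelling signal in the same spectral channels as the cameras means the ratio can be formed channel by channel without any spectral resampling.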

Camera

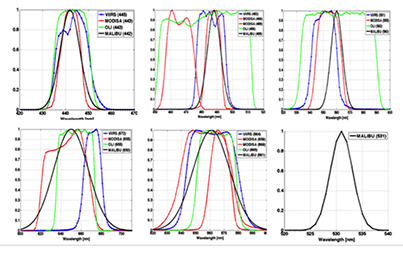

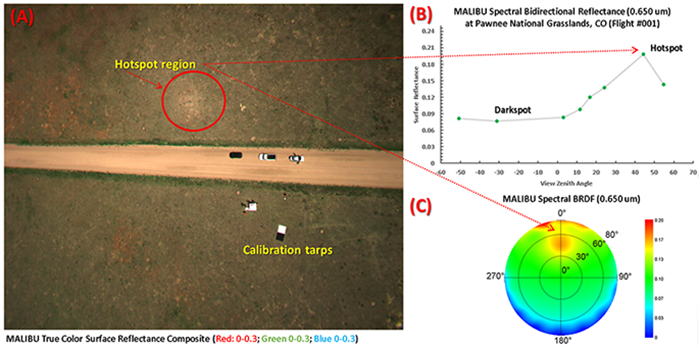

The center wavelengths of the six MALIBU channels are 442, 488, 531, 560, 650, and 861 nm, corresponding to the bandpasses of several satellite sensors (e.g., MODIS, Landsat-8 OLI, and VIIRS).

The primary (port side) Tetracam camera has five channels and the incident light sensor, while the secondary (starboard side) camera has six channels. When combined, the cameras operate as a single sensor suite with a combined field-of-view of 117.2 degrees. The MALIBU channels were specifically chosen to cover the relative spectral response (RSR) of multiple satellite land sensors, such as Landsat-8 OLI, Sentinel-2 MSI, Terra and Aqua MODIS, Terra MISR, Suomi-NPP/JPSS VIIRS, and Sentinel-3 OLCI.
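To give a feel for what a 117.2 degree combined field-of-view means on the ground, the sketch below estimates the across-track swath width for a given flight altitude. It assumes flat terrain and a field-of-view centered on nadir, neither of which is stated here. The flight altitudes are hypothetical examples, not MALIBU's actual flight parameters.

```python
import math

COMBINED_FOV_DEG = 117.2  # combined field-of-view of the two cameras


def swath_width_m(altitude_m, fov_deg=COMBINED_FOV_DEG):
    """Across-track ground swath for a nadir-centered FOV over flat terrain."""
    half_angle = math.radians(fov_deg / 2.0)
    return 2.0 * altitude_m * math.tan(half_angle)


# Hypothetical altitudes above ground level, in meters.
for altitude in (50.0, 100.0, 120.0):
    print(f"{altitude:6.1f} m AGL -> swath of about {swath_width_m(altitude):6.1f} m")
```

Under these assumptions the swath scales linearly with altitude, at a little over three times the flying height.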

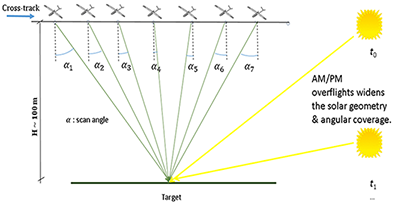

Dual Tetracam cameras (with overlapping swaths) are mounted on the platform across-track. Overlapping scenes along-track provide multi-angular retrievals. By deploying MALIBU several times over a single day, data can be obtained from multiple solar angles as well as multiple observation angles, which significantly improves the accuracy of BRDF retrievals.

Deployment

Images of the MALIBU team from the first deployment in late June at the Pawnee National Grasslands, Colorado.

The first MALIBU test flights were conducted at the Pawnee National Grasslands from the 28th to the 30th of June, 2016. Three flights were co-located with NIST-traceable reflectance targets and supported by a ground survey crew for post-process geolocation. The final test flight, Flight #003, on 30 June, was successfully completed during a Landsat-8 OLI overpass. This effort helped establish the suitability of MALIBU and demonstrated the consistency and repeatability of the observations.

A video overview of the Pawnee deployment can be viewed here.

[A] A true color composite collected by MALIBU's starboard viewing camera during its maiden flight at the Pawnee site. The image shows a distinct backscattering 'hotspot' region (red circle), where sun exposure is highest. Note also the two calibration tarps, which were used to ensure instrument pointing accuracy and stability. [B] Angular distribution of spectral bidirectional reflectance factors (BRFs) collected over a sample grassland field plot at the Pawnee site (40.7693, -104.6431). By analyzing the relative magnitude of the 'darkspot' to the 'hotspot' reflectance values for each MALIBU pixel (here at view zenith angles of -45 and 45 degrees), we can extract information about the directional structure of this semi-arid landscape. Moreover, key biophysical parameters (LAI, FPAR, PRI, and VI) can be estimated with high accuracy from MALIBU BRFs, using both in-situ and model-based approaches. [C] Corresponding spectral BRDF for the same field plot. These observations can be integrated for all view-illumination conditions to estimate narrowband and broadband albedos for each MALIBU pixel.
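The hotspot-to-darkspot comparison described in panel [B] can be sketched as a normalized difference of the two BRFs, with the same form reused for a vegetation index from the 650 nm and 861 nm channels. This is one common way to express such contrasts. Whether MALIBU processing uses this exact form is not stated here, and all BRF values below are hypothetical.

```python
def normalized_difference(a, b):
    """Return (a - b) / (a + b), the generic normalized-difference form."""
    total = a + b
    if total == 0:
        raise ValueError("a + b must be non-zero")
    return (a - b) / total


# Hypothetical BRFs for one pixel in the hotspot and darkspot directions,
# keyed by channel center wavelength in nm.
brf_hotspot = {650: 0.14, 861: 0.36}
brf_darkspot = {650: 0.07, 861: 0.24}

# Angular contrast per channel: larger values indicate stronger anisotropy.
hot_dark_contrast = {
    wl: normalized_difference(brf_hotspot[wl], brf_darkspot[wl])
    for wl in brf_hotspot
}

# Hypothetical near-nadir BRFs, used for an NDVI-style vegetation index
# (near-infrared minus red, over their sum).
brf_nadir = {650: 0.09, 861: 0.28}
ndvi = normalized_difference(brf_nadir[861], brf_nadir[650])

for wl, value in hot_dark_contrast.items():
    print(f"{wl} nm hotspot/darkspot contrast: {value:.3f}")
print(f"NDVI: {ndvi:.3f}")
```

Normalizing by the sum keeps the index bounded between -1 and 1 for positive reflectances, which makes pixels with different overall brightness easier to compare.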